The Dark Side of Skool Communities: Banned Without Notice (and Why It Matters)

Table of Contents

- TL;DR

- What happened (facts first)

- The part that matters: knowledge can disappear overnight

- I wasn’t just lurking (receipts of contribution)

- The asymmetry nobody wants to say out loud

- The hidden cost: you can “crank up” and still be disposable

- What “fair moderation” looks like (minimum viable due process)

- If you’re a builder: treat communities like platform risk

- For the renegades (and the banned ones): come join us

- What I’d like (simple, reasonable)

- CTA

- Sources / Further Reading

TL;DR

- I logged in and found I was banned from AI Automation Agency Hub on Skool with no clear warning or explanation.

- What’s worse than the ban is the knowledge loss: after I was banned, posts and replies where I had helped other members appeared to be no longer visible.

- This isn’t a hit piece on any one person. It’s a case study in community power asymmetry: members create value, but access (and visibility) can be revoked instantly.

- Communities at scale need minimum due process: notice, reason, and a real appeals path.

- If you’re building a business in someone else’s community, treat it as platform risk and design a backup plan (owned audience + owned knowledge).

Figure 0: “Banned without notice” — the core problem: you can build value on rented land, then lose access instantly.

What happened (facts first)

I logged into my Skool account and saw a “BANNED” status for the community:

- Community: AI Automation Agency Hub

- Platform: Skool

- Community URL shown: https://skool.com/learn-ai

- Scale shown on the page: 289.7k members, 861 online, 8 admins

Figure 1: My account showing I’m banned from “AI Automation Agency Hub” on Skool.

I’m not writing this to litigate anyone’s motives.

I’m writing it because something about this should make any builder pause:

In a knowledge community, the most valuable asset isn’t the brand. It’s the accumulated answers.

And in my case, it looks like some of those answers are now missing.

The part that matters: knowledge can disappear overnight

A ban is personal.

But the real blast radius is communal.

After I was banned, it appeared that content I had posted—questions answered, frameworks, links, and references—was no longer visible.

That creates collateral damage:

- People who bookmarked threads lose them.

- New members can’t find prior answers.

- The community pays the cost again (re-asking, re-answering, re-litigating the same basics).

If you’ve ever run a team, you already know the analogue:

Deleting institutional knowledge is like firing your best operator and burning the SOPs.

The ban is one action.

The knowledge deletion is a second-order effect.

I wasn’t just lurking (receipts of contribution)

To be clear: I wasn’t passively consuming.

I was participating, answering questions, and sharing useful resources. Here are a few examples, taken from my own saved notes, as receipts.

Example 1: helping members understand AI outbound compliance

One of the most useful threads I took part in was about AI outbound compliance and consent. A member summarized notes from a compliance call and highlighted two points: prior express written consent becomes important starting Jan 27, 2025, and the burden of proof is on the sender.

This is exactly the kind of “builders helping builders” knowledge that communities are supposed to protect.

Example 2: answering a member looking for a serious partner

A member posted that they were overwhelmed building an AI automation agency solo and wanted a serious partner.

My response (abridged):

- Map the full process on paper first (keep it to 5–7 steps)

- Find the one handoff that creates most delays

- Automate repetitive data-entry; keep humans on strategy
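The first two steps of that advice can be made concrete with a tiny script. This is a minimal sketch, assuming you note for each step in your map roughly how many hours the work sits idle before that step starts; the `Step` class, the `slowest_handoff` helper, and the sample pipeline are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    # Hypothetical measure: hours the work sits idle before this step starts.
    wait_hours_before: float


def slowest_handoff(steps: list[Step]) -> tuple[str, str, float]:
    """Return (from_step, to_step, idle_hours) for the handoff with the longest wait."""
    if len(steps) < 2:
        raise ValueError("a process map needs at least two steps to have a handoff")
    # Handoff i sits between steps[i - 1] and steps[i]; the first step has no handoff.
    worst = max(range(1, len(steps)), key=lambda i: steps[i].wait_hours_before)
    return steps[worst - 1].name, steps[worst].name, steps[worst].wait_hours_before


# Hypothetical five-step map of a small agency's client pipeline.
process = [
    Step("Lead comes in", 0),
    Step("Qualify lead", 4),
    Step("Send proposal", 30),
    Step("Collect payment", 12),
    Step("Onboard client", 6),
]

print(slowest_handoff(process))  # ('Qualify lead', 'Send proposal', 30)
```

Once the worst handoff is visible, that is usually the first place to automate the repetitive data entry.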

That’s not “engagement farming.” That’s practical help.

Example 3: compliance + policy notes (EU AI Act)

I also posted a heads-up for anyone shipping AI into the EU, pointing to official resources and suggesting a lightweight compliance workflow:

- Maintain a simple schema (models/versions, categories, interaction logs)

- Automate a weekly “policy diff” so changes don’t surprise you

- Store trace bundles for multi-year retention

Again: not legal advice—just operational guidance for builders.
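A minimal sketch of that workflow, assuming you save a plain-text snapshot of each policy page you track once a week (fetching and scheduling are left out). The record types, field names, and the `policy_diff` and `trace_bundle` helpers are hypothetical, and the `category` field holds whatever internal classification you use.

```python
import difflib
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class ModelRecord:
    model: str
    version: str
    category: str  # hypothetical field: your own internal risk label


@dataclass
class InteractionLog:
    model: str
    version: str
    timestamp: str  # ISO 8601 string, e.g. "2025-01-06T09:30:00Z"
    summary: str


def policy_diff(last_week: str, this_week: str) -> list[str]:
    """Unified diff between two saved text snapshots of a policy page (empty if unchanged)."""
    return list(
        difflib.unified_diff(
            last_week.splitlines(),
            this_week.splitlines(),
            fromfile="last_week",
            tofile="this_week",
            lineterm="",
        )
    )


def trace_bundle(record: ModelRecord, logs: list[InteractionLog]) -> dict:
    """Serialize a model record plus its logs, with a SHA-256 digest you can
    recompute later to check that the stored body is unchanged."""
    body = json.dumps(
        {"model": asdict(record), "logs": [asdict(log) for log in logs]},
        sort_keys=True,
    )
    return {"sha256": hashlib.sha256(body.encode("utf-8")).hexdigest(), "body": body}


changes = policy_diff("Rule A\nRule B", "Rule A\nRule B (amended)")
if changes:
    # A non-empty diff is the cue to review what changed this week.
    print("\n".join(changes))
```

Sorting the JSON keys keeps the serialized body stable, so the same record and logs always produce the same digest.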

Example 4: connecting people to solutions

In multiple threads (lead gen automation, hiring requests), I shared relevant Loom demos and direct guidance.

When someone contributes consistently and the community later loses that content, the loss is shared.

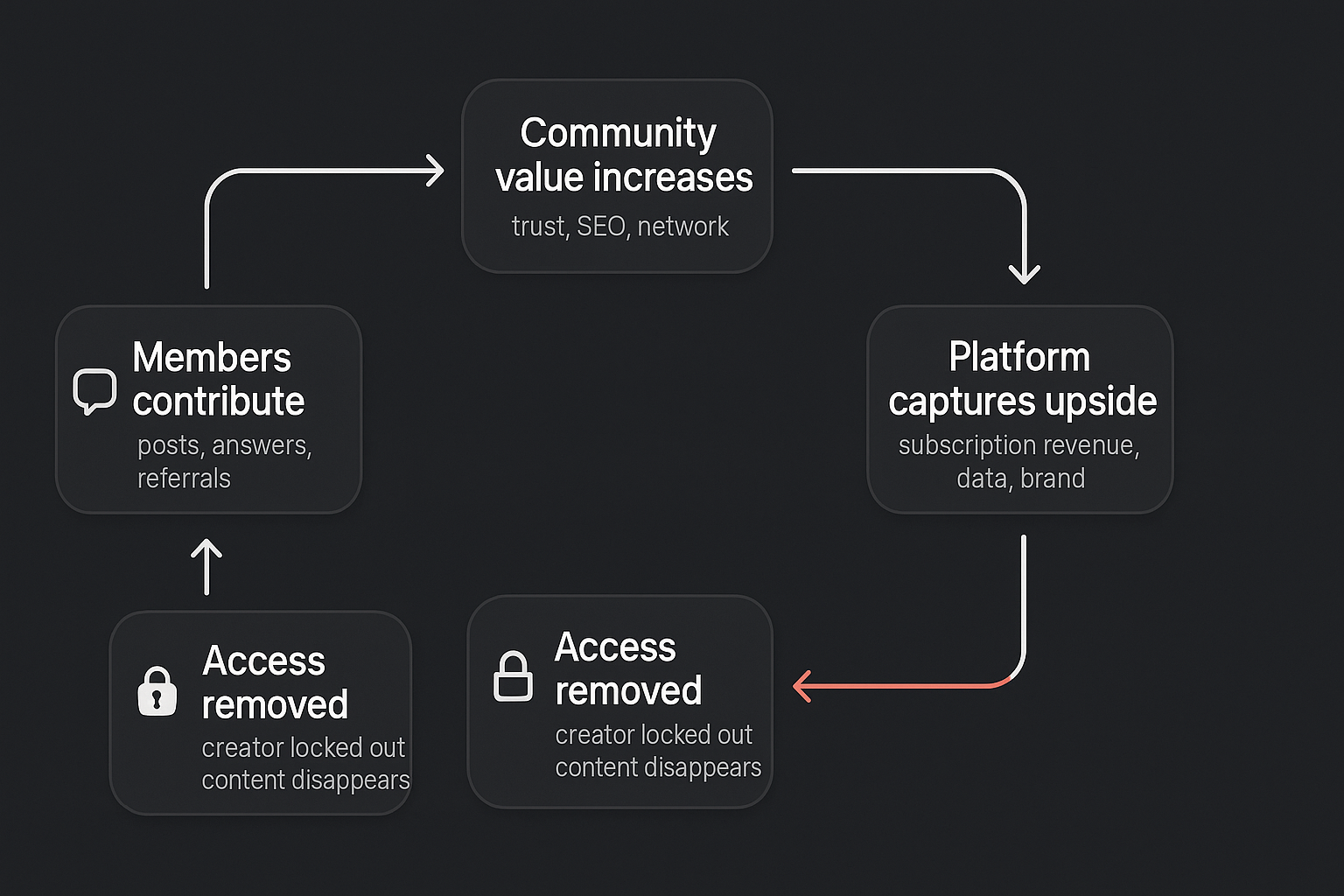

The asymmetry nobody wants to say out loud

In communities, we talk about “belonging.”

But the actual structure is closer to:

Figure 2: The community value loop: members contribute → value grows → platform captures upside → enforcement event → access removed.

This isn’t inherently evil.

Moderation is necessary. Communities at scale get spammy, scammy, and chaotic.

But when the system has zero built-in due process, you’re not in a community.

You’re on rented land.

The hidden cost: you can “crank up” and still be disposable

Here’s the cognitive dissonance:

I was Level 5.

In Skool’s design, levels are supposed to represent contribution.

So what does it mean when you can be removed instantly—with no explanation—and your contributions seemingly vanish with you?

It means the level system is an incentive layer.

It’s not governance.

And incentives without governance produce exactly this outcome:

- People chase points

- People pour time into posts

- The system benefits from the labor

- But the labor has no guarantees

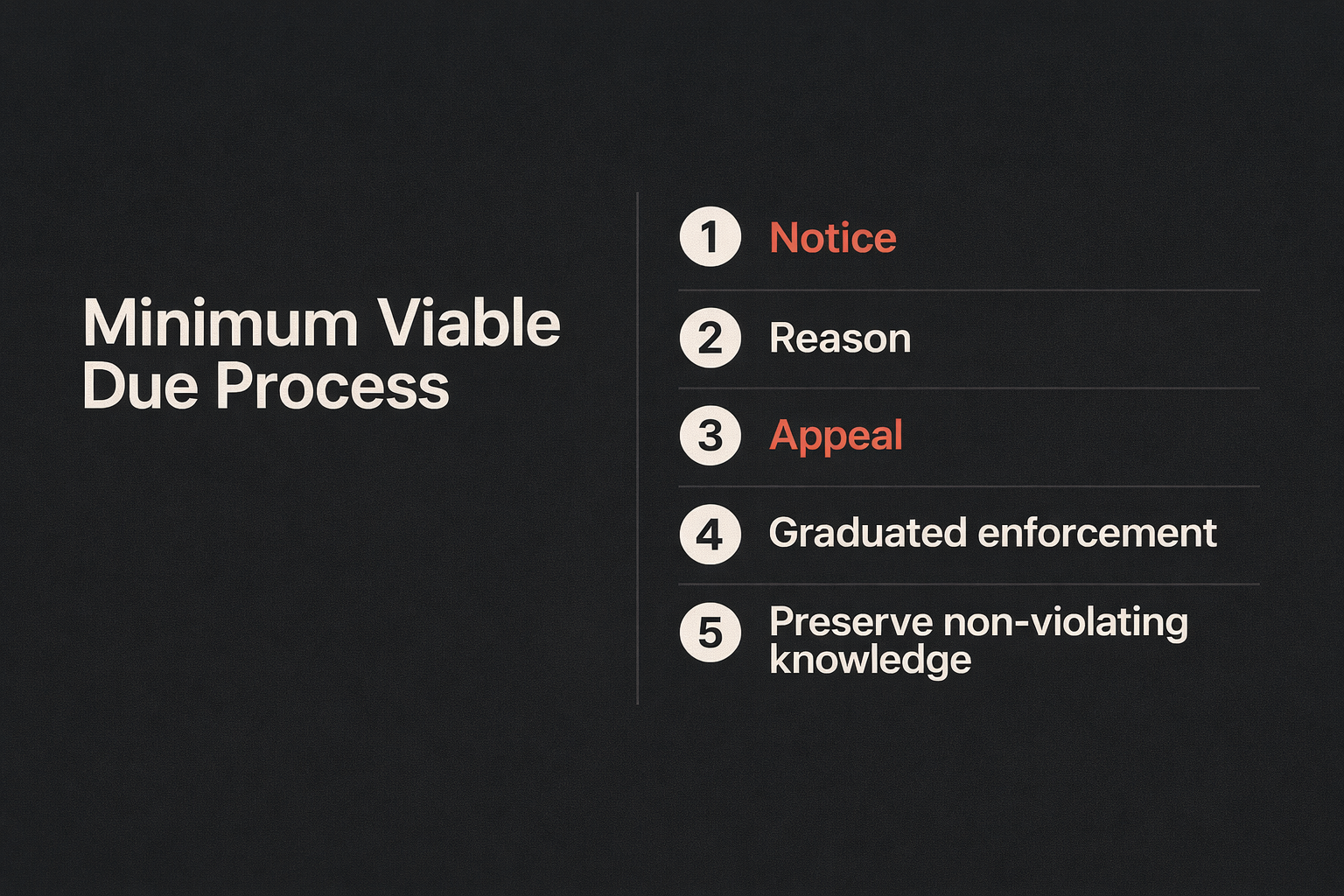

What “fair moderation” looks like (minimum viable due process)

I’m not asking for perfect.

I’m asking for basics—standards that already exist in broader content moderation discussions.

A good public reference point is the Santa Clara Principles on Transparency and Accountability in Content Moderation, which emphasize concepts like notice and appeals.

Here’s what “minimum viable due process” can look like for a community:

Figure 3: “Minimum Viable Due Process” — a basic trust loop for community enforcement.

- Notice
  - A clear message: what rule was triggered, what content/action caused it, and what happens next.

- Reason (even if brief)
  - “Spam,” “harassment,” “self-promotion,” “impersonation,” etc.
  - You don’t need a novel, but you do need a category.

- Appeal path
  - A way to request review that doesn’t rely on guessing who to DM.

- Graduated enforcement (when appropriate)
  - Warning → temporary restriction → ban.

- Content continuity choices
  - If someone’s removed, consider leaving high-value, non-violating informational posts intact.
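As a rough illustration of how a community tool could encode this loop, here is a small Python model. Everything in it is hypothetical; it is not Skool’s API or any platform’s actual system. Every enforcement action produces a notice with a rule, a reason category, the offending content, and an appeal link; ordinary violations climb the warning → temporary restriction → ban ladder; and removal hides only posts that were actually flagged.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    WARNING = "warning"
    TEMP_RESTRICTION = "temporary restriction"
    BAN = "ban"


# Graduated enforcement: each ordinary strike moves one rung up this ladder.
LADDER = [Action.WARNING, Action.TEMP_RESTRICTION, Action.BAN]


@dataclass
class Notice:
    member: str
    action: Action
    rule: str         # which rule was triggered
    reason: str       # short category, e.g. "spam" or "self-promotion"
    content_ref: str  # the post or action that caused it
    appeal_url: str   # hypothetical link to a review form


@dataclass
class Member:
    name: str
    strikes: int = 0
    notices: list[Notice] = field(default_factory=list)


def enforce(member: Member, rule: str, reason: str, content_ref: str,
            appeal_url: str, severe: bool = False) -> Notice:
    """Apply the next enforcement step and always return a notice the member can see."""
    if not reason.strip():
        raise ValueError("every enforcement action needs at least a reason category")
    if severe:
        # Clear-cut abuse (e.g. scams) may skip the ladder but still gets a notice and appeal path.
        action = Action.BAN
        member.strikes = len(LADDER)
    else:
        action = LADDER[min(member.strikes, len(LADDER) - 1)]
        member.strikes += 1
    notice = Notice(member.name, action, rule, reason, content_ref, appeal_url)
    member.notices.append(notice)
    return notice


def visible_after_removal(posts: list[dict], flagged_ids: set[str]) -> list[dict]:
    """Content continuity: keep a removed member's non-violating posts visible."""
    return [post for post in posts if post["id"] not in flagged_ids]


m = Member("example-member")
for _ in range(3):
    n = enforce(m, "Rule 3: no unsolicited promotion", "self-promotion",
                "post/123", "https://example.com/appeal")
    print(n.action.value)  # warning, then temporary restriction, then ban
```

The key design choice is that `enforce` cannot act without producing a `Notice`, so notice and reason are structural rather than optional.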

If you run a serious community, these practices aren’t “nice.”

They’re trust infrastructure.

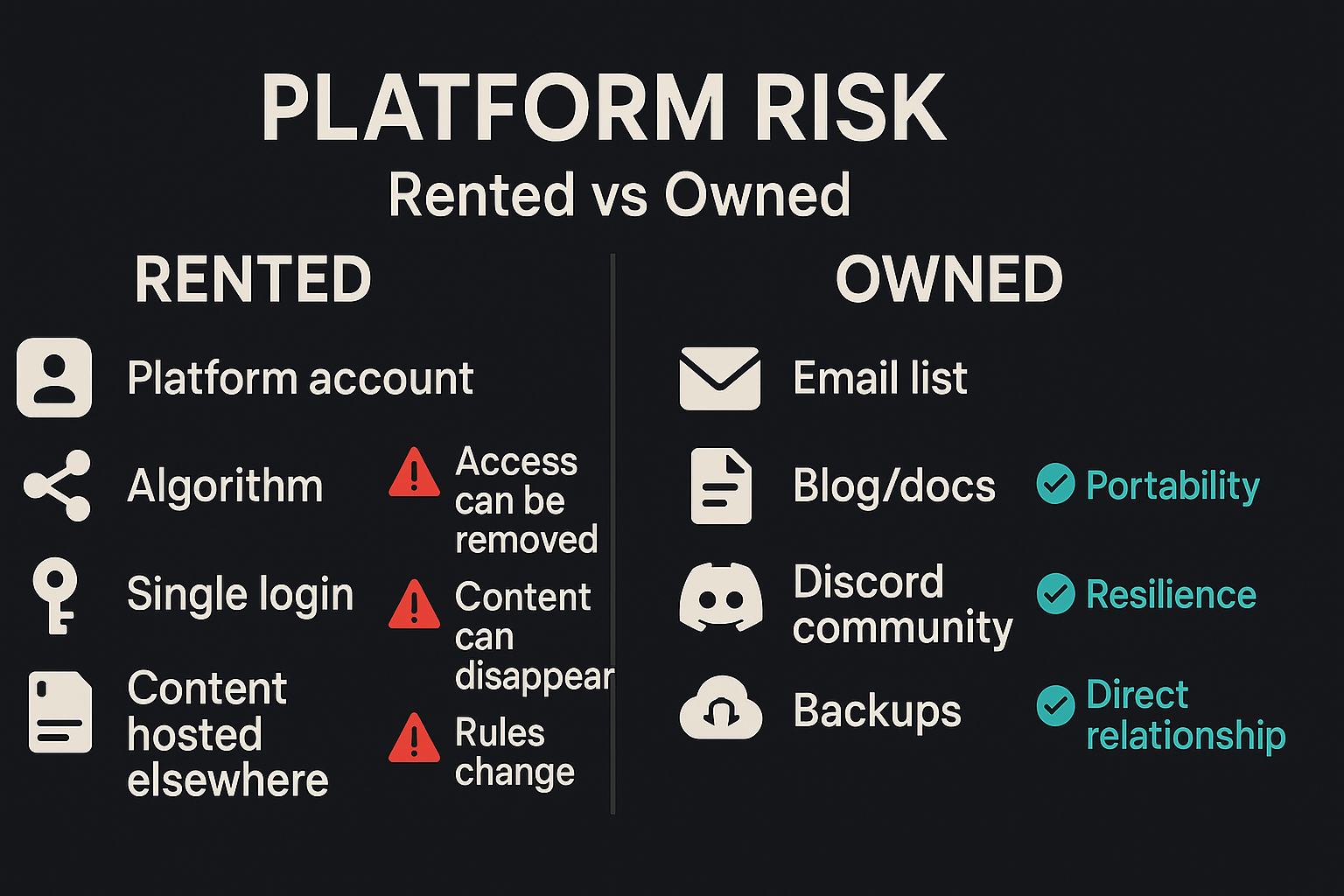

If you’re a builder: treat communities like platform risk

If a channel is not owned by you, it is a dependency.

And dependencies can fail.

Figure 4: “Rented vs Owned” — the simplest way to think about platform risk.

Here are practical steps to reduce the blast radius:

1) Keep your best answers somewhere you control

Write the canonical version of your best posts in a doc, a public blog, or a knowledge base.

Then share the link.

Now, if you lose access, your work survives.
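One low-effort way to do this, sketched under assumptions: keep each canonical answer as a Markdown file in a folder you control (a git repo, a synced drive, or the source folder of a static blog), and regenerate an index you can link to. The function names and file layout are hypothetical; adapt them to whatever blog or knowledge base you actually use.

```python
import re
import tempfile
from pathlib import Path


def slugify(title: str) -> str:
    """Turn a post title into a filesystem- and URL-friendly name."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return slug or "untitled"


def save_canonical_answer(root: Path, title: str, body: str, shared_in: str = "") -> Path:
    """Write the canonical copy of an answer as Markdown in a folder you control."""
    root.mkdir(parents=True, exist_ok=True)
    lines = [f"# {title}", ""]
    if shared_in:
        lines += [f"Also shared in: {shared_in}", ""]
    lines.append(body.strip())
    path = root / f"{slugify(title)}.md"
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


def build_index(root: Path) -> Path:
    """Regenerate index.md with one link per saved answer."""
    answers = sorted(p for p in root.glob("*.md") if p.name != "index.md")
    links = [f"- [{p.stem}]({p.name})" for p in answers]
    index = root / "index.md"
    index.write_text("# Answers\n\n" + "\n".join(links) + "\n", encoding="utf-8")
    return index


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp) / "answers"
        save_canonical_answer(root, "Map your process first!",
                              "Keep it to 5-7 steps, then find the slowest handoff.",
                              shared_in="a community thread")
        print(build_index(root).read_text(encoding="utf-8"))
```

Sharing then means posting a short summary in the community with a link back to the published copy, so the community post becomes the mirror rather than the only original.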

2) Build an “owned audience” layer

Even a simple email list changes the power dynamic.

If your only relationship with your audience is inside a platform, you don’t really have an audience.

3) Avoid single points of failure

Communities can be part of your distribution.

They shouldn’t be your entire identity.

For the renegades (and the banned ones): come join us

If you’ve been quietly burned by a community ban, a shadowban, a deleted post, or a “we don’t owe you an explanation” moment—welcome to the club.

I’m building a space for builders who:

- share real playbooks

- don’t hide the ball

- don’t treat contributors as disposable

Figure 5: Discord invite promo for builders who got burned by platform moderation.

Join the Discord: https://discord.gg/jJnek28ka4

What I’d like (simple, reasonable)

I’m open to the possibility that I missed context.

If I violated a rule, tell me what it was.

If I didn’t, reverse the decision.

And either way, I’d argue for one policy change:

Add a basic “notice + reason + appeal” loop as a default standard.

CTA

If you’re building automation systems (or any serious product) and you want to design workflows that don’t collapse when a single platform changes its mind, that’s exactly what we do at Poly.

- Learn more: https://www.poly186.com

Sources / Further Reading

- Skool Support - Skool’s support entry point (includes help@skool.com).

- Skool Help Center - Product documentation and community admin topics.

- The Santa Clara Principles on Transparency and Accountability in Content Moderation - Notice and appeals as baseline expectations.

- PartnerHero: The best practices for moderation appeals - Practical guidance on building appeals flows.

- Stream: Guide to Transparent Content Moderation (Appeals & Statements of Reasons) - Overview of transparent moderation systems.